Microsoft seem to be big fans of the GAC - which is fine for them as the platform provider, but personally I prefer to keep my code away from the GAC as far as possible. I have a few pet reasons, including some still-healing wounds from COM/COM+ (dll hell), and the fact that most of what I write needs to get deployed to a server-farm by tools like "robocopy", which is no friend of the GAC.

This difference raised its head recently when I was trying to get the VS2008 tooling working for protobuf-net. The way it works is that as part of the VS registration (which is done in a VS-specific registry hive) you tell it how to get your package - and a valid choice here is to specify the codebase. This is the option I took, since it introduces no extra configuration details, other than the mandatory VS hooks.

So that is fine and dandy, dandy and fine. But problems soon happened. In particular, since I already had dlls for protobuf-net and protogen, I wanted to ship those in the install directory, and have my VS package simply reference them. Which turned out to be not-so-simple...

Assembly resolution

The first thing to break down was assembly resolution; my package would simply refuse to find the dlls. Because of the different paths etc, your package's install directory is pretty unimportant to VS. If your required dlls are in the GAC, then it will find them - but I really didn't want to do that. But there are other tricks...

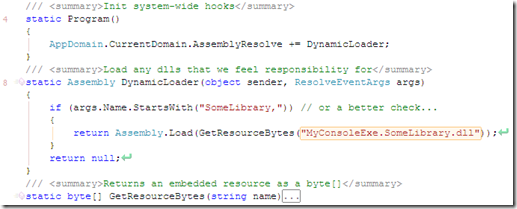

A little-known feature in .NET is that there is an event (AppDomain.AssemblyResolve) that fires when it can't find an assembly. By handling this event we can load it manually (for example: from the file system, download-on-demand, or from an embedded resource):

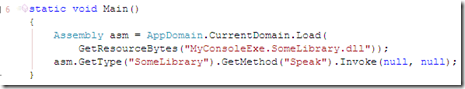
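In text form, a handler of that kind might look roughly like the sketch below. The class name `LocalAssemblyResolver` and the "look next to this assembly" rule are my assumptions for illustration, not the actual protobuf-net code:

```csharp
using System;
using System.IO;
using System.Reflection;

static class LocalAssemblyResolver
{
    // Call once, early, before any type from the missing assemblies is touched.
    public static void Register()
    {
        AppDomain.CurrentDomain.AssemblyResolve += Resolve;
    }

    public static Assembly Resolve(object sender, ResolveEventArgs args)
    {
        // args.Name is the full display name ("Foo, Version=..., Culture=...");
        // AssemblyName strips that down to the simple name.
        string simpleName = new AssemblyName(args.Name).Name;

        // Assumption: the dependencies sit in the same directory as this assembly.
        string baseDir = Path.GetDirectoryName(
            typeof(LocalAssemblyResolver).Assembly.Location);
        if (string.IsNullOrEmpty(baseDir)) return null;

        string candidate = Path.Combine(baseDir, simpleName + ".dll");

        // Returning null tells the runtime that we could not help.
        return File.Exists(candidate) ? Assembly.LoadFrom(candidate) : null;
    }
}
```

The same handler could just as easily read the bytes from an embedded resource and pass them to `Assembly.Load(byte[])`.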

This works fine in most applications, but not VS; this event simply never fires within a VS package. Oh well. Another feasible option is to use reflection to load the dll (probably acceptable if the API is very shallow):
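As a rough sketch of the reflection approach (the helper name `ReflectionLoader.InvokeStatic` is hypothetical, and it assumes the library exposes a public static method taking a single string):

```csharp
using System;
using System.Reflection;

static class ReflectionLoader
{
    // Loads an assembly from an explicit path and calls a public static
    // method that takes one string argument. All names are supplied by the
    // caller; nothing here is specific to any particular library.
    public static object InvokeStatic(
        string assemblyPath, string typeName, string methodName, string argument)
    {
        // LoadFrom (rather than LoadFile) so that dependencies in the same
        // directory at least have a chance of being found.
        Assembly assembly = Assembly.LoadFrom(assemblyPath);

        // Second argument: throw if the type is missing instead of returning null.
        Type type = assembly.GetType(typeName, true);

        MethodInfo method = type.GetMethod(
            methodName,
            BindingFlags.Public | BindingFlags.Static,
            null,
            new[] { typeof(string) },
            null);
        if (method == null)
            throw new MissingMethodException(typeName, methodName);

        // Static method, so there is no target instance.
        return method.Invoke(null, new object[] { argument });
    }
}
```

Every call into the library has to go through this kind of late-bound plumbing, which is why it is only really palatable for a very shallow API.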

Not pretty, but it'll work - except now you just move the problem downstream; any dlls that our library needs will be missing too, with all the same problems plus some more issues associated with the "load context" (depending also on the whole LoadFile / LoadFrom choice)... oh dear.

Processes vs AppDomains

Our original problem was that files that existed locally weren't being resolved due to the assembly resolution paths of VS; but what if the second assembly was actually an exe? In the case of protogen.exe this was fine. So we could launch a new Process (setting the working path, etc); not elegant, but very effective - in particular it bypasses all the issues of the "load context" inherited from VS; we have our own process, thanks very much.

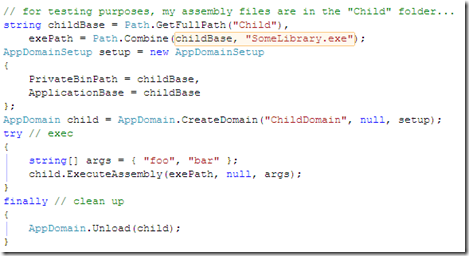
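For reference, launching the exe as a separate process can be sketched along these lines. `ToolRunner.Run` is a hypothetical helper, and the paths and arguments are whatever your package computes:

```csharp
using System.Diagnostics;

static class ToolRunner
{
    // Runs an executable in its own process and returns its exit code.
    public static int Run(string exePath, string workingDirectory, string arguments)
    {
        var startInfo = new ProcessStartInfo(exePath, arguments)
        {
            // Relative paths used by the tool resolve against this directory.
            WorkingDirectory = workingDirectory,
            // CreateNoWindow is only honoured when the shell is not used.
            UseShellExecute = false,
            CreateNoWindow = true
        };

        using (Process process = Process.Start(startInfo))
        {
            process.WaitForExit();
            return process.ExitCode;
        }
    }
}
```

Note that the arguments have to be packed into a single string, so anything containing spaces needs quoting by the caller.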

But there is an alternative... a second AppDomain; this lives within the same process, but is isolated (in terms of assemblies, configuration, etc), and can be unloaded. And neatly, you can execute an assembly's entry-point very easily (conveniently passing arguments as a string[] rather than having to pack them down as a single string for Process):
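A minimal sketch of that, assuming the full .NET Framework (creating AppDomains is not supported on .NET Core and later). `AppDomainRunner` and the domain name are made up for illustration:

```csharp
using System;

static class AppDomainRunner
{
    // Runs the entry point of a managed exe inside a fresh AppDomain and
    // returns the value that its Main method returned.
    public static int Run(string exePath, string baseDirectory, string[] args)
    {
        // ApplicationBase is where the new domain probes for assemblies,
        // so point it at the install directory rather than VS's own.
        var setup = new AppDomainSetup { ApplicationBase = baseDirectory };
        AppDomain domain = AppDomain.CreateDomain("tool-domain", null, setup);
        try
        {
            // This two-argument overload needs .NET 4 or later; on earlier
            // versions use ExecuteAssembly(exePath, null, args), which takes
            // an Evidence as its second parameter.
            return domain.ExecuteAssembly(exePath, args);
        }
        finally
        {
            // Unloading releases the assemblies that were loaded into the domain.
            AppDomain.Unload(domain);
        }
    }
}
```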

This is actually quite a cute way of launching a sub-process (for .NET code).

Processes within AppDomains within Packages

As it happens, in my case my child dll itself has to launch a Process during execution ("protoc.exe"); normally this is fine, but VS was soon causing problems again; for some curious reason (presumably linked to security evidence), when using ExecuteAssembly this inner Process.Start would always flash a console frame (even when told not to). Oddly, if I instead launch a Process from my Package, and that Process launches a Process, then this doesn't happen. A bit of an undesirable quirk, but as a consequence this multi-level Process tree is currently how the VS package does its work.

But in general you can use ExecuteAssembly as a neat alternative to a Process.

Summary

VS packages have "fun" written all over them... maybe my borked installer was right all along ;-p