AUTH concept, but frankly it is designed to deter casual access: all commands and data remain unencrypted and visible in the protocol, including any AUTH requests themselves. What we probably want, then, is some kind of transport-level security.

Now, redis itself does not provide this; there is no standard encryption, but you could configure a secure tunnel to the server using something like stunnel. This works, but requires configuration at both client and server. To make our lives a bit easier, some of the redis hosting providers are beginning to offer encrypted redis access as a supported option; this applies to both Microsoft “Azure Redis Cache” and Redis Labs “redis cloud”. I’m going to walk through both of these, discussing their implementations and showing how we can connect.

Creating a Microsoft “Azure Redis Cache” instance

First, we need a new redis instance, which you can provision at https://portal.azure.com by clicking on “NEW”, “Everything”, “Redis Cache”, “Create”:

There are different sizes of server available; they are all currently free during the preview, and I’m going to go with a “STANDARD” / 250MB:

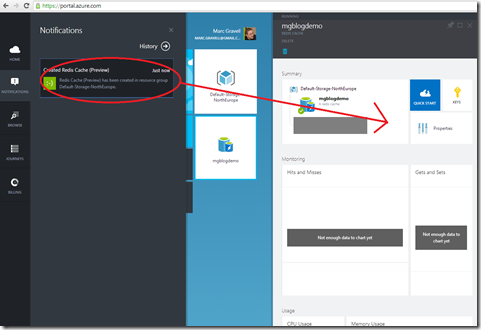

Azure will now go away and start creating your instance:

This could take a few minutes. (Actually, it takes surprisingly long IMO, considering that starting a redis process is virtually instantaneous; but for all I know it is running on a dedicated VM for isolation, and either way it is quicker and easier than provisioning a server from scratch.) After a while, it should become ready:

Connecting to a Microsoft “Azure Redis Cache” instance

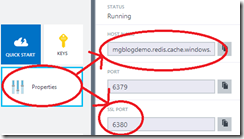

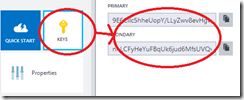

We have our instance; let’s talk to it. Azure Redis Cache uses a server-side certificate chain that should be valid without any configuration, and authenticates clients with a password (not a client certificate), so all we need to know is the host address, port, and key. These are all readily available in the portal:

Normally you wouldn’t post these on the internet, but I’m going to delete the instance before I publish, so; meh. You’ll notice that there are two ports: we only want to use the SSL port. You also need either stunnel or a client library that can talk SSL; I strongly suggest that the latter is easier! So:

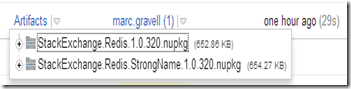

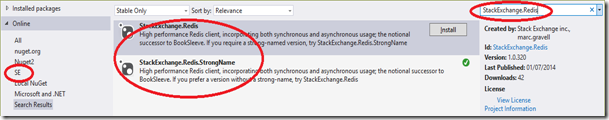

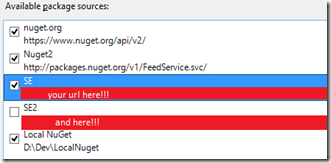

Install-Package StackExchange.Redis, and you’re sorted (or Install-Package StackExchange.Redis.StrongName if you are still a casualty of the strong name war). The configuration can be set either as a single configuration string or via properties on an object model; I’ll use a single string for convenience, and my string is:

```
mgblogdemo.redis.cache.windows.net,ssl=true,password=LLyZwv8evHgveA8hnS1iFyMnZ1A=
```

The first part is the host name without a port; the middle part enables ssl, and the final part is either of our keys (the primary in my case, for no particular reason). Note that if no port is specified, StackExchange.Redis will select 6379 if ssl is disabled, and 6380 if ssl is enabled. There is no official convention on this, and 6380 is not an official “ssl redis” port, but: it works. You could also explicitly specify the ssl port (6380) using standard {host}:{port} syntax. With that in place, we can access redis (an overview of the library API is available here; the redis API is on http://redis.io):

```csharp
using System;
using StackExchange.Redis;

// the single configuration string shown above
var configString = "mgblogdemo.redis.cache.windows.net,ssl=true,password=LLyZwv8evHgveA8hnS1iFyMnZ1A=";

using var muxer = ConnectionMultiplexer.Connect(configString);
var db = muxer.GetDatabase();

db.KeyDelete("foo");        // start from a clean slate
db.StringIncrement("foo");  // INCR -> 1
db.StringIncrement("foo");  // INCR -> 2
db.StringIncrement("foo");  // INCR -> 3
int i = (int)db.StringGet("foo");

Console.WriteLine(i); // 3
```

and there we are; readily talking to an Azure Redis Cache instance over SSL.
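To make the three parts of that configuration string, and the default-port rule, a bit more concrete, here is a deliberately simplified, hypothetical reader for strings of that shape. It is not how StackExchange.Redis does it (the library has its own ConfigurationOptions.Parse, which understands many more options); the class name SimpleRedisConfig is made up for illustration, and the sketch assumes a single endpoint written as a host name or IPv4 address (no IPv6 literals).

```csharp
using System;

// Hypothetical, simplified reader for "host[:port],ssl=...,password=..." strings.
// NOT StackExchange.Redis's parser; it only illustrates the parts described above.
// Assumes one endpoint given as a host name or IPv4 address (no IPv6 literals).
public sealed class SimpleRedisConfig
{
    public string Host { get; private set; } = "";
    public int Port { get; private set; }
    public bool Ssl { get; private set; }
    public string Password { get; private set; }

    public static SimpleRedisConfig Parse(string config)
    {
        var result = new SimpleRedisConfig();
        string endpoint = null;

        foreach (string raw in config.Split(','))
        {
            string part = raw.Trim();
            if (part.Length == 0) continue;

            int eq = part.IndexOf('=');
            if (eq < 0)
            {
                // no '=': this is the {host} or {host}:{port} part
                endpoint = part;
                continue;
            }

            // split on the FIRST '=' only, because keys can themselves end in '='
            string key = part.Substring(0, eq).Trim().ToLowerInvariant();
            string value = part.Substring(eq + 1).Trim();
            switch (key)
            {
                case "ssl": result.Ssl = bool.Parse(value); break;
                case "password": result.Password = value; break;
                default: break; // other options are ignored in this sketch
            }
        }

        if (endpoint == null)
            throw new FormatException("no endpoint in configuration");

        int colon = endpoint.LastIndexOf(':');
        if (colon >= 0)
        {
            // an explicit {host}:{port} always wins
            result.Host = endpoint.Substring(0, colon);
            result.Port = int.Parse(endpoint.Substring(colon + 1));
        }
        else
        {
            // default port chosen after ssl is known: 6380 with ssl, 6379 without
            result.Host = endpoint;
            result.Port = result.Ssl ? 6380 : 6379;
        }
        return result;
    }
}
```

Note the split on the first `=` only: base64-style keys can end in `=` (as the one above does), so splitting on every `=` would truncate the password.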

Creating a new Redis Labs “redis cloud” instance and configuring the certificates

Another option is Redis Labs; they too have an SSL offering, although it makes some different implementation choices. Fortunately, the same client can connect to both, giving you flexibility. Note: the SSL feature of Redis Labs is not yet available directly through the UI, as they are still gauging uptake; but it exists, it works, and it is available on request. Here’s how:

Once you have logged in to Redis Labs, you should immediately have a simple option to create a new redis instance:

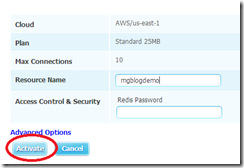

As with Azure, a range of different levels is available; I’m using the Free option, purely for demo purposes:

We’ll keep the config simple:

and wait for it to provision:

(note: this only takes a few moments)

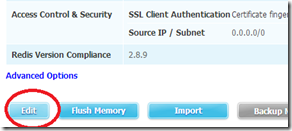

Don’t add anything to this DB yet, as it will probably get nuked in a moment! Now we need to contact Redis Labs; the best option here is support@redislabs.com. Make sure you tell them who you are, your subscription number (blanked out in the image above), and that you want to try their SSL offering. At some point in that dialogue a switch gets flipped (or a dial cranked), and the Access Control & Security setting changes from password:

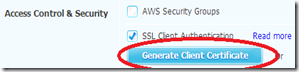

to SSL; click edit:

and now we get many more options, including the option to generate a new client certificate:

Clicking this button will cause a zip file to be downloaded, which has the keys to the kingdom:

The pem file is the certificate authority; the crt and key files are the client certificate and its private key. They are not in the most convenient format for .NET code like this, so we need to tweak them a bit; openssl makes this fairly easy:

```
c:\OpenSSL-Win64\bin\openssl pkcs12 -inkey garantia_user_private.key -in garantia_user.crt -export -out redislabs.pfx
```

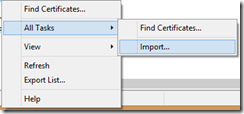

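This combines the two parts of the client certificate (the certificate and its private key) into a pfx, which .NET is much happier with. OpenSSL will prompt for an export password; the connection code further down passes an empty string, so either leave it blank or update that code to match. The pem can be imported directly by running certmgr.msc (note: if you don’t want to install the CA, there is another option; see below):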

Note that .pem files don’t match any of the predefined file-type filters in the import dialog, so you will need to select “All Files (*.*)”:

After the prompts, it should import:

So now we have a physical pfx for the client certificate, and the server’s CA is known; we should be good to go!
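If you would rather not install the Redis Labs CA in the certificate store at all, the trust decision can be made in code instead: StackExchange.Redis’s ConfigurationOptions exposes a CertificateValidation event with the same shape as SslStream’s RemoteCertificateValidationCallback. The sketch below is one possible check, not the library’s or Redis Labs’ recommended approach: accept a certificate the OS already trusts, and otherwise accept it only if its chain ends at one specific CA that you load yourself from the downloaded pem. The class and method names are hypothetical, and the pem path in the comment is a placeholder, since the CA file’s name isn’t given here.

```csharp
using System.Net.Security;
using System.Security.Cryptography.X509Certificates;

// Hypothetical helper; wiring it up might look like:
//   var ca = new X509Certificate2(@"C:\redislabs_creds\<CA pem file>");
//   options.CertificateValidation += (sender, cert, chain, errors) =>
//       PinnedCaValidation.Validate(cert, ca, errors);
public static class PinnedCaValidation
{
    public static bool Validate(X509Certificate serverCert, X509Certificate2 trustedCa, SslPolicyErrors errors)
    {
        // Already trusted by the OS (e.g. the CA was imported): accept.
        if (errors == SslPolicyErrors.None)
            return true;

        // Only tolerate chain problems; a missing certificate or a
        // host-name mismatch (alone or combined) is still rejected.
        if (errors != SslPolicyErrors.RemoteCertificateChainErrors || serverCert == null || trustedCa == null)
            return false;

        using var chain = new X509Chain();
        // Assumption: the private CA has no reachable revocation endpoint.
        chain.ChainPolicy.RevocationMode = X509RevocationMode.NoCheck;
        // Allow an untrusted root here; we check *which* root it is below.
        chain.ChainPolicy.VerificationFlags = X509VerificationFlags.AllowUnknownCertificateAuthority;
        chain.ChainPolicy.ExtraStore.Add(trustedCa);

        using var leaf = new X509Certificate2(serverCert);
        if (!chain.Build(leaf))
            return false; // e.g. expired or otherwise invalid

        // The chain must terminate at exactly the CA we were given.
        X509Certificate2 root = chain.ChainElements[chain.ChainElements.Count - 1].Certificate;
        return root.Thumbprint == trustedCa.Thumbprint;
    }
}
```

Comparing the root’s thumbprint matters: AllowUnknownCertificateAuthority on its own would accept a chain ending at *any* untrusted root. On .NET 5 and later, setting X509ChainPolicy.TrustMode to CustomRootTrust and adding the CA to CustomTrustStore is a tidier way to express the same idea.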

Connecting to a Redis Labs “redis cloud” instance

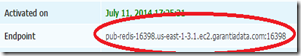

Back on the Redis Labs site, select your subscription, and note the Endpoint:

We need a little more code to connect than we did with Azure, because we need to tell it which client certificate to load; the configuration object model has events that mimic the callback methods on the SslStream constructor:

```csharp
using System;
using System.Security.Cryptography.X509Certificates;
using StackExchange.Redis;

var options = new ConfigurationOptions();
options.EndPoints.Add("pub-redis-16398.us-east-1-3.1.ec2.garantiadata.com:16398");
options.Ssl = true;

// Supply the client certificate: the pfx produced by openssl above.
// The second argument is the pfx password; "" assumes it was exported with an empty one.
options.CertificateSelection += delegate {
    return new X509Certificate2(@"C:\redislabs_creds\redislabs.pfx", "");
};

using var muxer = ConnectionMultiplexer.Connect(options);
var db = muxer.GetDatabase();

db.KeyDelete("foo");
db.StringIncrement("foo");
db.StringIncrement("foo");
db.StringIncrement("foo");
int i = (int)db.StringGet("foo");

Console.WriteLine(i); // 3
```

That is the same smoke test we did for Azure. If you don’t want to import the CA certificate, you could also use the CertificateValidation event to provide custom certificate checks (return true if you trust it, false if you don’t).

Way way way tl;dr

Cloud host providers are happy to let you use redis, and happy to provide SSL support so you can do it without being negligent.

StackExchange.Redis has hooks to let this work with the two SSL-based providers that I know of.