Target audience: library authors who want to get into this “dnx” thing.

Unless you have been asleep at the wheel, you probably know that Microsoft have been working really hard at moving forward with the “corefx” / “core-clr” / “dnx” / “asp.net 5” stream of work (all broadly related and often used interchangeably, whether correctly or incorrectly) – their effort to make .net a truly open-source, cross-platform technology. An awesome set of aims. Release Candidate 1 shipped a few days ago, and the platform is now becoming very capable.

Any platform is only as rich as the ecosystem – the libraries available for it. In the case of core-clr (which, right or wrong, I will now use to mean the set of things mentioned above), this is a combination of the framework libraries (which are now open source, this is the “corefx” piece), but also the third party community libraries – which often, but not exclusively, means nuget.

It is my judgement that there are a large number of library authors and contributors who want to start exploring the tools, but find the current state confusing and overwhelming. My aim here, then, is to try to demystify what you need to do. I’m going to assume that you already have some .net libraries that you want to migrate to core-clr. I’m drawing on the involvement I’ve had working on the core-clr conversions of Dapper, protobuf-net, Sigil, Jil (all now available for core-clr on nuget), SimpleSpeedTester (PR not yet taken), and StackExchange.Redis (I’m still working through a big PR kindly contributed by some awesome Microsoft folks). Topics I hope to cover:

- the tools of the trade – what you need to get started, what each does, and where to find things

- our sample project: FastMember

- say hello to project.json and package structure

- understanding target platforms / monikers

- more on package management

- changing your code to fit the platform; what is going to hurt?

- testing your code

- packaging and deployment

Caveat emptor

All of these things are evolving; I hope it is all correct at the moment (rc1), but many details may have subtle changes by rtm and beyond. Such is the life of the software developer. Expect your cheese to be moved.

The tools of the trade

In the past, the .net framework has meant huge system-wide installs of the entire framework library, with many upgrades applied over-the-top (meaning: once you’ve installed 4.6.1 or whatever, all 4.6 apps get those changes). In dnx, everything is much more granular; this makes for a much better upgrade cadence – System.Some.Component has changes they want to get out? Sure thing: they just deploy it to nuget, and you pick it up when you choose. The tool-chain is very different, and you need some new pieces; so… let’s go get them.

dnvm

The first thing you need is “dnvm” – the “.NET Version Manager”. This tool is in charge of installing and managing as many different runtimes as you like, including cross-targeting reference runtimes. Essentially, when people talk about “1.0.0-rc1-final” (the current release), that is the runtime version. You can install this for windows, mac or linux. If you’re using Visual Studio, be sure to install the appropriate bits (look just above “runtime and tooling”). In particular: don’t get confused by the fact that the page is talking about “ASP.NET 5”. Even if you are a pure library author with no interest in ASP.NET, this is the right stuff. I told you the names were largely interchangeable!

So what does dnvm do? Once it is installed, the first thing you want to do is “dnvm update-self” (to ensure you have the latest dnvm tooling), then “dnvm upgrade”, to update to the latest runtime.

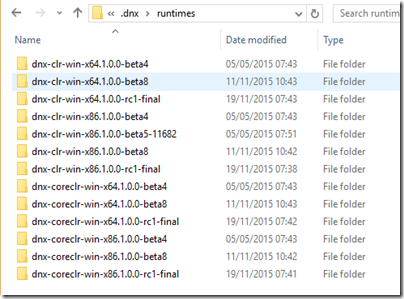

dnvm is basically a tool that manages and switches between any number of runtimes, where runtimes are just folders under %USERPROFILE%/.dnx/runtimes; here are mine:

which is exactly what I get if I type “dnvm list”

If I decide I don’t need those beta 4-8 bits, I can just delete the folders any way I choose, and type “dnvm list” again:

(I could also use “dnvm uninstall” for this)

I can install additional runtimes with “dnvm install”, and I can give runtimes aliases – for example, in my examples I’ve added “c64” to mean “rc1 coreclr on x64 targeting windows”. I did this with:

dnvm alias c64 1.0.0-rc1-final -r coreclr -a x64

(and likewise for the others) I can now switch my command-line tools between runtimes by entering “dnvm use c64” or “dnvm use n86”. Note that a runtime comprises not just the core pieces to make .net work at all, but also includes per runtime our other main tools - “dnu” and “dnx”.

dnu

Our next tool is “dnu”, the “Microsoft .NET Development Utility”. This acts as a wrapper including:

- package management (for obtaining and managing our dependencies)

- build tools (the compiler)

- packaging and deployment tools (think: “nuget pack”)

To see this tool in action we really need to have a project, but perhaps the most important thing to remember about a lot of what it does is: %USERPROFILE%/.dnx/packages. In the same way that dnvm owns /runtimes, dnu owns /packages – the local cache of dependencies we have on our local machine.

dnx

The last of our tools is “dnx”, the “Microsoft .NET Execution environment”; basically, it runs stuff! There are ways of bootstrapping things, but for most dev purposes, dnx is your friend (unless you’re using an IDE to do the same thing).

Again, we can’t really show dnx doing much without a project, so we’ll come back to it.

All of these are command-line tools; almost everything can also be done in the IDE (with the right tools); but it is worth understanding what is going on.

What is FastMember?

Frankly, it is a little project I wrote ages ago and haven’t changed in ages (I didn’t even migrate it from google-code until recently). In fact, I even lost the snk password and had to break the identity (well damn, that’s embarrassing). What it does isn’t particularly important – just that it is an existing real-world library that I want to move to core-clr (one of the things it does very nicely is allow you to expose an IEnumerable<T> as an IDataReader for SqlBulkCopy). It does a few non-trivial things, but we’ll burn that bridge when we get to it.

Say hello to project.json

Foreword:

This is actually one of the hardest bits. Once you have the project structure working, most other things are relatively easy! This step is awkward for existing projects. It would be nice if the tools made this a little less messy.

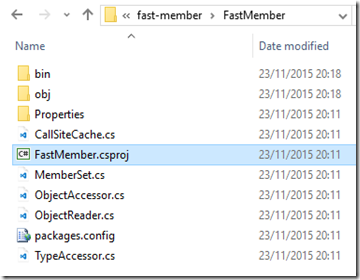

You may have heard mention of project.json; this is the new format that can be used as an alternative to a csproj file. It is clean, human maintainable, and relatively versatile. So how do we get one? There is a way to do this with “dnu wrap”, but I’m not personally very satisfied with the hybrid beast that results from that – I’m going to focus instead on a complete transition to core-clr tooling. The good news is that a project.json file is very simple – and it is opinionated, with a lot of assumptions made implicitly (like: “include all the .cs files in the sub-tree”). One of the opinions it currently holds very strongly is that the folder name defines identity. So since I want my package to be FastMember, it needs to be in a folder called FastMember. This fits my existing file structure, except currently my .csproj is also in the FastMember folder:

This is actually slightly problematic, and is a current pain point – because project.json and csproj do not play nicely in the same folder. While transitioning (as in: while you wait for the tools to stabilise so that the entire team are familiar with working in dnx), you probably want to have both csproj and project.json builds side-by-side. Your mileage may vary, but what has worked best for me is to move the csproj.

You should apply your own thoughts, but since a csproj targets a single framework (and a project.json doesn’t), I’m using a _Net40, _Net35 etc suffix for each of my csproj folders, with the project.json going into the main folder. So for each of my projects I’m going to:

- Relocate the csproj file (and packages.config) into FastMember_Net40 (the original FastMember/FastMember.csproj targets .net 4.0) – don’t worry about multi-targeting – that will be covered later

- Manually edit the csproj to pick up code files from the existing location – there’s a trick you can do here with the same “everything under the sub-tree” approach; basically, from the new location I can tell it to include “..\FastMember\**\*.cs”

- Create a minimal project.json; for starters, “{ }” will let us at least get to the next step

And:

- Rename the FastMember_Signed folder to FastMember.Signed, because the nuget package is called FastMember.Signed

- Update the existing sln with the new csproj project locations

- Create a new sln that has the project.json projects

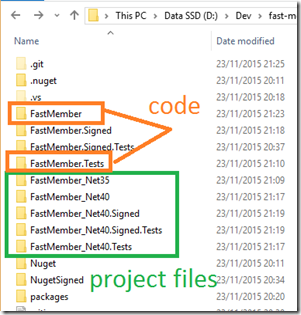

Here’s the outcome:

The _Net35, _Net40 etc just contain the various csproj files for different builds. My actual code is in the FastMember and FastMember.Tests folders, so that I can move the project.json into each. I split this into two commits – one that refactored the existing csproj / sln, and one that created the project.json and corresponding sln.

IMPORTANT: as soon as you add a project.json to a sln you get a second file per project.json for free: {YourProject}.xproj; these should be included in source control.

IMPORTANT: as soon as you try to build (which first invokes package restore), or explicitly run “dnu restore” – you get a project.lock.json file per project.json; this is an internal tracking file and does not need to be included in source control.

So after this, I have:

- actual code (.cs) in FastMember and FastMember.Tests

- a project.json (and lock file, and xproj) in FastMember, FastMember.Tests, FastMember.Signed, FastMember.Signed.Tests (note that the .Signed ones will be identical once complete, but with strong names included) – linked by FastMember.DNX.sln

- csproj / packages.config in each of the _Net35 / _Net40 folders – linked by FastMember.sln

Now, after all that, I can load *either* of the two solutions.

At this point, my project.json is just a dummy “{ }”, but we can fill it out; it is alarmingly simple to get a minimal build that mirrors the existing .net (pre core-clr) setup:

- FastMember and FastMember.Signed should each target .net 3.5 and .net 4.0

- they all need to reference System.Data from the BCL

- the 3.5 build needs to define NO_DYNAMIC to compile without “dynamic”

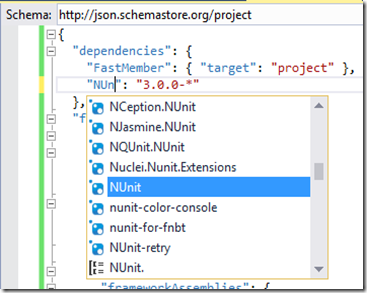

- the two test projects should reference NUnit 3 and their corresponding main project file

- the .Signed versions should use the SNK, and need to obtain their .cs files from the parallel non-signed versions
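As a rough sketch, the first three requirements above (two target frameworks, System.Data from the BCL, and NO_DYNAMIC for the 3.5 build) might translate into something like the following for the main FastMember folder. This is an illustration against the rc1 layout rather than the actual FastMember file; the version numbers and the description text are my assumptions.

```json
{
  "version": "1.0.0-*",
  "description": "Illustrative sketch only; version numbers are assumptions",
  "frameworks": {
    "net35": {
      "compilationOptions": { "define": [ "NO_DYNAMIC" ] },
      "frameworkAssemblies": { "System.Data": "2.0.0.0" }
    },
    "net40": {
      "frameworkAssemblies": { "System.Data": "4.0.0.0" }
    }
  }
}
```

Anything declared inside a single framework block (such as the NO_DYNAMIC define) applies only to that target, which is how one file can describe both builds.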

The project.json for the above is very simple; and if you use Visual Studio the IDE will automatically prompt you in all the right places (for other editors, the schema is published here). Note that BCL references come under “frameworkAssemblies”, whereas packages from our package manager come under “dependencies”. One really nice thing the Visual Studio tools do for us here is auto-completion on package sources – on both names and versions:
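To make the “frameworkAssemblies” versus “dependencies” split concrete, here is a hedged sketch of what a test project’s file might look like. The NUnit version and the way the main project is referenced are assumptions on my part: as far as I know, a project folder with a matching name in the same solution is picked up ahead of a nuget package of that name, but check this against your own tooling.

```json
{
  "version": "1.0.0-*",
  "description": "Illustrative sketch only; versions are assumptions",
  "dependencies": {
    "FastMember": "1.0.0-*",
    "NUnit": "3.0.0"
  },
  "frameworks": {
    "net40": {
      "frameworkAssemblies": { "System.Data": "4.0.0.0" }
    }
  }
}
```

Here “dependencies” sits at the top level, so it applies to every target, while the BCL reference stays inside the framework it belongs to.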

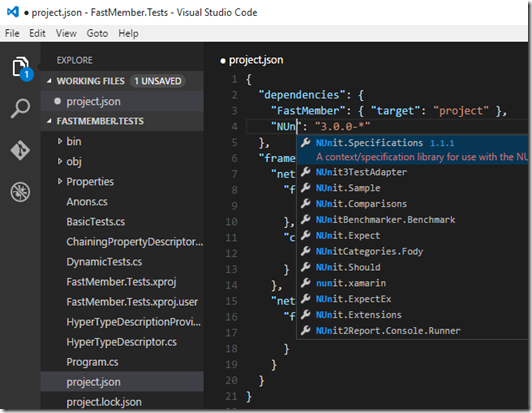

A quick shout out to “Visual Studio Code”: it should be noted that the folder-based approach used by core-clr works very well in this cut-down (but fast and well-featured) editor. And for extra awesome, it includes all these same abilities (just by typing “code .” from the command-line in the folder of choice):

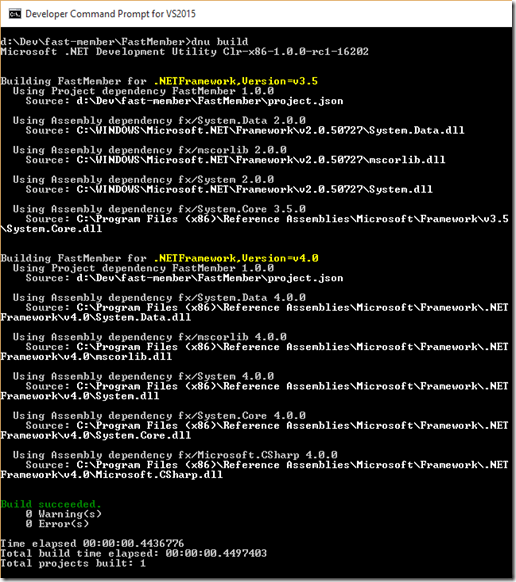

In addition to building in Visual Studio, we can also build at the command line (after running “dnu restore”) by using “dnu build” or “dnu build --configuration release” in any of the folders with a project.json:

As you can see, building a single project.json can build for multiple targets – in this case .NET 3.5 and .NET 4.0. This results in the usual dll, pdb and xml outputs under bin/debug/net35 and bin/debug/net40 – or bin/release/net35 and bin/release/net40 if we specified release. So far, so good.

End of Part 1

At this point, you would be well within your rights to be underwhelmed. We’ve taken quite a bit of effort to get back to exactly where we started from: a project we can build that targets .net 3.5 and .net 4.0 and can be compiled to binaries - but using a project.json instead of a csproj. Everything so far has been just tooling changes. In part 2, we’ll get into what this enables. It goes uphill from here, honest! See you soon.